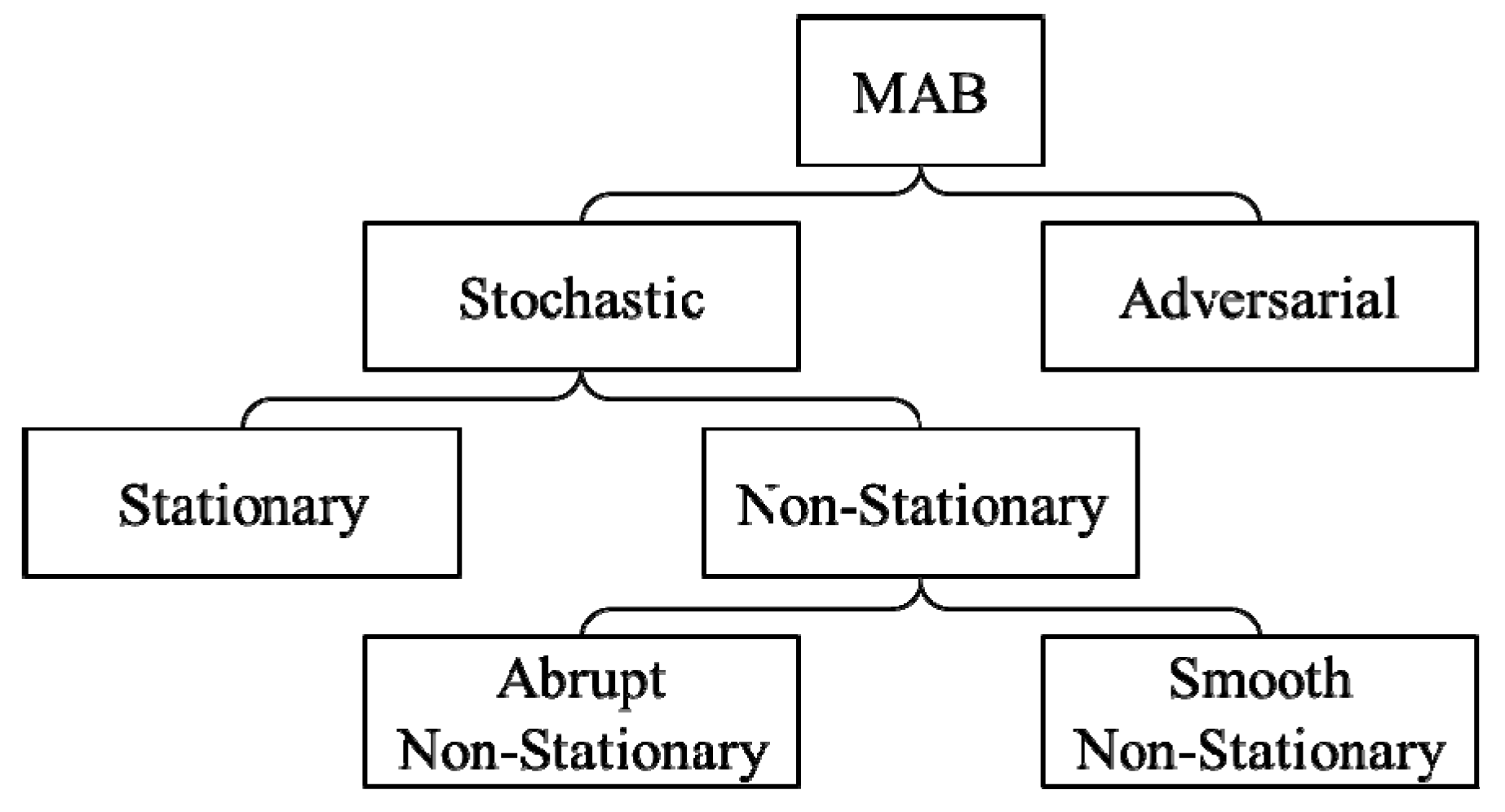

We study a simple model for social-learning agents in a restless multiarmed bandit (rMAB). The bandit has one good arm that changes to a bad one with a certain probability. Each agent stochastically selects one of two methods to seek the good arm: random search (individual learning) or copying information from other agents (social learning). The fitness of an agent is the probability that it knows the good arm in the steady state of the agent system. In this model, we explicitly construct the unique Nash equilibrium state and show that the corresponding strategy for each agent is an evolutionarily stable strategy (ESS) in the sense of Thomas. We show that the fitness of an agent with the ESS is superior to that of an asocial learner when the success probability of social learning is greater than a threshold determined by the success probability of individual learning, the probability of a change of state of the rMAB, and the number of agents. The ESS Nash equilibrium is a solution to Rogers’ paradox.

One of the differences between human beings and other animals is that the former transfer their predecessors’ experience and wisdom in the form of knowledge [1]. Social learning (learning from the experience of others) is advantageous compared to individual learning [2, 3, 4]. Without social learning, everybody would have to learn everything for themselves [2]. In other words, individual learning costs more than social learning does [2, 3, 4]. Therefore, Rogers’ finding that social learning is not necessarily more advantageous than individual learning is counterintuitive [5]. Rogers’ conclusion seems very strange in light of our experience [4].

Several attempts have been made to solve Rogers’ paradox in social learning. Boyd and Richerson [2] pointed out that Rogers’ paradox is not a paradox when the only benefit of social learning is to avoid learning costs. Further, on analysing two models in which social learning reduces individual-learning costs and improves the information obtained through individual learning, they concluded that social learning can be adaptive. Ref. [3] advocated a learning form called critical social learning, which is social learning supplemented by individual learning. Its authors based their discussion on rate equations and succeeded in solving the paradox. Ref. [4] studied the relative merits of several learning strategies by using a spatially explicit stochastic model. The concept of adaptive information filtering [3, 6] has been proposed as key to the effective working of social learning. It indicates that each member effectively learns good-quality information provided by other members. For example, in a famous tournament by Rendell et al. [6], discountmachine, the strategy that did the most effective social learning, won over the other strategies that combined individual learning and social learning.

In this study, we propose a stochastic model to solve Rogers’ paradox in the framework of the restless multiarmed bandit (rMAB) used in that tournament. The objective of this study is to analyse equilibrium social learning in an rMAB. An rMAB is analogous to the “one-armed bandit” slot machine but with multiple “arms”, each with a distinct payoff. We call an arm with a high payoff a good arm. The term “restless” means that the payoffs change randomly. Agents maximise their payoffs by exploiting an arm, searching for a good arm at random (individual learning), or copying an arm exploited by other agents (social learning). Because the rMAB is simple in structure and general, we believe that it is an appropriate framework in which to consider Rogers’ paradox.

As a model for social-learning collectives, Bolton and Harris studied an agent system in a multi-armed bandit [7]. They assumed that the agents could know all information of other agents and obtained a socially optimal experiment (learning) strategy.
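To make the model summarised in this section more concrete, the following is a minimal simulation sketch, not the analytical construction used in the paper. It illustrates one good arm that is replaced with some probability, agents that choose between random search and copying, and fitness estimated as the long-run fraction of agents that know the good arm. All names and update rules here are assumptions made for illustration: `simulate_fitness`, `social_prob`, `p_change`, `q_individual`, and `q_social` are hypothetical, as are the choices that one randomly selected agent learns per step and that copying succeeds only if the copied agent currently knows the good arm.

```python
import random


def simulate_fitness(n_agents=10, social_prob=0.5, p_change=0.05,
                     q_individual=0.2, q_social=0.8,
                     n_steps=20000, burn_in=2000, seed=0):
    """Estimate the long-run fraction of agents that know the good arm.

    Illustrative assumptions (not taken from the paper):
      * every agent uses the same probability `social_prob` of social learning;
      * one randomly chosen agent gets a learning opportunity per step;
      * when the good arm changes, all agents lose their knowledge of it.
    """
    if n_agents < 2:
        raise ValueError("need at least two agents for social learning")
    rng = random.Random(seed)
    knows = [False] * n_agents  # knows[i]: agent i knows the current good arm
    total = 0.0
    for step in range(n_steps):
        # Restless step: the good arm turns bad with probability p_change.
        if rng.random() < p_change:
            knows = [False] * n_agents
        # One agent, chosen uniformly, tries to learn if it does not know.
        i = rng.randrange(n_agents)
        if not knows[i]:
            if rng.random() < social_prob:
                # Social learning: copy a uniformly chosen *other* agent.
                j = rng.randrange(n_agents - 1)
                if j >= i:
                    j += 1
                if knows[j] and rng.random() < q_social:
                    knows[i] = True
            elif rng.random() < q_individual:
                # Individual learning: random search succeeds with q_individual.
                knows[i] = True
        # Accumulate the fraction of informed agents after the burn-in period.
        if step >= burn_in:
            total += sum(knows) / n_agents
    return total / (n_steps - burn_in)


if __name__ == "__main__":
    for r in (0.0, 0.5, 0.9):
        print(r, simulate_fitness(social_prob=r))
```

Sweeping `social_prob` in this sketch gives a rough feel for how the mix of individual and social learning affects the average fitness. It treats all agents as identical, so it does not by itself locate the Nash equilibrium or the ESS discussed in the text.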